You can tell a lot about a person from their social media profiles. After just a few minutes reading their hot takes on Twitter, admiring their Clarendon-filtered selfies on Instagram, and comparing the differences between their professional portraits on LinkedIn and their rowdy AfrikaBurn videos on Facebook, you can paint a fairly clear picture of the person’s age, race, gender, sexual orientation, medical history and religious and political views. Legally, of course, almost none of that information can or should be used if they’re applying for a job at your company and you’re trying to decide whether to call them in for an interview.

Yet it is. Of course it is. ‘Screening social media allows employers to look inside a person’s head to see who a candidate really is,’ according to Les Rosen, CEO of California-based background screening firm Employment Screening Resources, who was speaking at the recent Society for Human Resource Management 2018 Talent Conference & Exposition in Las Vegas. ‘But if you use it incorrectly, there’s a world of privacy and discrimination problems that could arise.’

Rosen warned conference delegates about the dangers of TMI: too much information. ‘[It] means you are looking at that applicant, and by looking at their social media site or perhaps a photo or something that they have blogged about, you are going to learn all sorts of things as an employer you don’t want to know and [that] legally cannot be the basis of a decision,’ said Rosen.

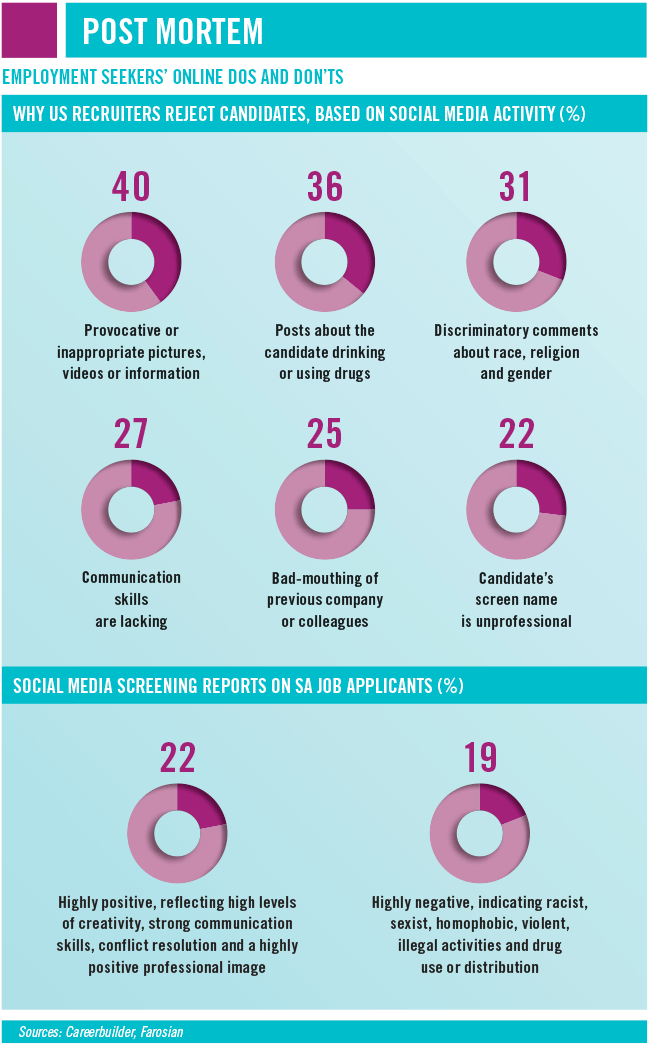

Farhad Bhyat, CEO of Johannesburg-based social media company Farosian, agrees. ‘If you do the social media screening yourself, you’re virtually 100% guaranteed that some form of discrimination will take place,’ he says. ‘It could be based on the candidate’s pregnancy status, sexual orientation, religious beliefs, political beliefs, which soccer team they support… There are literally hundreds of aspects of why or how a person could be discriminated against. And when that discrimination does take place, there is no liability limitation for that organisation if protected information has been accessed. You cannot just claim, “I didn’t know…” or “I didn’t see it”, because if it can be proven that you did access those accounts, and that information is available in the public domain, you will need to prove that it was never seen. Once it’s seen it can never be unseen.’

That’s why a growing number of companies are using automated AI-powered social media screening processes to gather information on job candidates, compiling reports on the candidate without their knowledge but – given that it’s all based on information the individuals themselves have made public – with their tacit permission. Background checks, of course, are standard HR operating procedure. Recruiters routinely vet applicants via police clearances, academic checks, ID checks, reference checks and copies of official certifications… But as the hirer, it’s almost impossible to resist the temptation to quietly log on and check out the person’s Facebook profile as well, especially when the cost of a disastrous hire can be so high. (Although it’s impossible to quantify the cost and inconvenience of hiring, firing and hiring again, Zappos CEO Tony Hsieh once estimated that bad appointments had cost the global online clothing retailer more than $100 million.)

So, is social media screening necessary? Sure. But is it ethical – or, for that matter, legal? To the latter, Bhyat answers: ‘Yes and no. It is dependent on the process that is being used, while also being linked to the “who” is conducting this. When social media screening is conducted on a person and only public domain content and information is assessed, then yes, this is legal. Secondly, this is further legitimised when the screening is being conducted by an independent third party, as it eliminates potential discrimination and the accessing of protected information.’ If the screening is done by a person within the organisation – say, the team leader or manager who’ll ultimately make the hiring call – then the risk of possible litigation on the grounds of discrimination increases.

Farosian is among a growing legion of international consultancies that offer third-party social media background checks, for exactly those reasons. ‘Our system is impartial, objective and unbiased,’ says Bhyat. ‘Every job applicant is assessed according to the exact same criteria, regardless of what country they live in, what country they were born in, their race, gender, sexual orientation, age… It doesn’t matter. The system automatically excludes any protected information to ensure that only the relevant information is delivered back to the person who requested the report.’

That report will include a risk assessment and a value culture match between the candidate and the hiring organisation, based on the applicant’s public social media activity (including likes, interests, and so on). The system combines impersonal AI and algorithms with an impartial human touch. ‘We’re building a platform that has a massive new AI, machine-learning engine with facial recognition software integrated into it, but there is still a human element,’ says Bhyat. ‘AI and machine learning are not yet at the point where they can assess subtle differences in text, wording and imagery.’

Information (or ‘content’) about the candidate is extracted via the system’s automated process, then categorised and scored as positive, neutral or negative. A human then assesses and situationally analyses the information. ‘If you’re doing the search yourself, you could find 50 pictures of your candidate blackout drunk,’ says Bhyat. ‘Our report doesn’t include imagery because it’s not an indicator of how well the person can or cannot do their job. Unless it impacts their professional image or performance, it’s not valid. It means nothing.’

A similar rule is applied to social media posts. ‘You need to look at the intent behind the post,’ says Bhyat. ‘If it is negative, was it intended as humour but interpreted as sensitive content? That happens in about 70% of negative content cases, where the person unknowingly posted something offensive, and the intention was not the interpretation. When you see a history of negative content, then you know the intention is negative – whether that’s racist, sexist, homophobic or whatever the case may be. But when you see that there was one potentially homophobic post out of 12 years of Facebook history, the odds are very high that it was unintentional or had a different meaning behind it.’ So, as cold and impersonal as third-party social media screening may be, at least it’s more forgiving than most online trolls.
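To make the distinction between a one-off post and a pattern concrete, here is a minimal sketch of how such a rule might be expressed in code. It is not Farosian’s method, which the article does not describe in detail. The `Post` type, the `summarise` function and both thresholds are hypothetical, and the positive, neutral or negative label on each post is assumed to have been assigned by an earlier step.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Post:
    year: int
    label: str  # "positive", "neutral" or "negative", assumed assigned upstream


def summarise(posts, min_negative=3, min_share=0.05):
    """Tally labels and separate an isolated negative post from a pattern.

    The thresholds are illustrative assumptions, not published values.
    """
    counts = Counter(post.label for post in posts)
    negative = counts["negative"]  # Counter returns 0 for a missing label
    share = negative / len(posts) if posts else 0.0

    if negative == 0:
        verdict = "none"
    elif negative >= min_negative and share >= min_share:
        verdict = "pattern"   # recurring negative content
    else:
        verdict = "isolated"  # likely unintentional or misread
    return {"counts": dict(counts), "negative_share": share, "verdict": verdict}


if __name__ == "__main__":
    # One negative post in a long, otherwise neutral history.
    history = [Post(2007 + i % 12, "neutral") for i in range(119)]
    history.append(Post(2015, "negative"))
    print(summarise(history)["verdict"])
```

In this sketch the verdict is only a flag. In line with the process Bhyat describes, anything marked `isolated` or `pattern` would still go to a human reviewer to judge intent and context.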

While the Farosian system – and others like it used around the developed world – does the same social media screening and Facebook stalking that prospective employers are itching to do, it does so far more thoroughly, and within the bounds of the law. ‘Finding a person’s social media account is actually very difficult these days, especially with the post-millennial generation,’ says Bhyat. ‘That generation is decreasing its Facebook usage, and very few of them go by their real names on Twitter or Instagram. If you were to search for my name on Instagram or Twitter, you’ll find me easily. Them? Not so much. They use weird and wonderful nicknames or names that have nothing to do with their ID book. Our algorithm uses a very specific methodology to accurately match the candidate’s personal profile. Some people also have secret Twitter or Facebook accounts. We find those.’

That may sound attractive to hiring companies, but for many job candidates it’s creepy and invasive. A study conducted in 2013 by North Carolina State University asked participants to imagine a hypothetical scenario in which a prospective employer had reviewed their Facebook profiles for professionalism. Half of the participants were asked how they would respond if they had gotten the hypothetical job, while the other half were asked how they’d respond if they hadn’t. The job offer made little difference, the study authors found, ‘with 60% of participants in both groups reporting a negative view of the potential employer due to a sense of having their privacy violated. Further, 59% of participants said they were significantly more likely than a control group that wasn’t screened to take legal action against the company for invasion of privacy’.

Six years later, in the post-Cambridge Analytica age of the General Data Protection Regulation, companies ignore that study at their peril. Then again, if the individual’s information is out there, willingly posted and shared with the entire internet, and it could provide make-or-break information about your prospective new employee, can you afford to not take a look?